An Update on Packagist.org Hosting

As we announced a bit over a week ago, we recently did some heavy server maintenance on the packagist.org website. I wanted to share some more details about the current infrastructure behind the website and how we got there.

Early days

From the beginning of the project in summer 2011, until April 2015, the site was hosted on a small server of mine which had a few other things running on it. All was running pretty well given the relatively low traffic we were serving back then. There were a few times where we had a couple hours of downtime but nothing dramatic that I can recall.

In April 2015, growing traffic was stretching that machine's limited bandwidth (100 Mbps if I recall correctly). Almost all of it was used up by JSON: the zip files with PHP code are served by GitHub and other package sources, so we only host the metadata. People complained more and more about requests failing randomly, so I migrated everything to a (rented) dedicated server with 8 cores, 64 GB of RAM and 500 Mbps of bandwidth. This gave us a lot of breathing room, but it was still a single machine, hardly an ideal situation for a service that a large chunk of the PHP community was becoming increasingly reliant on.

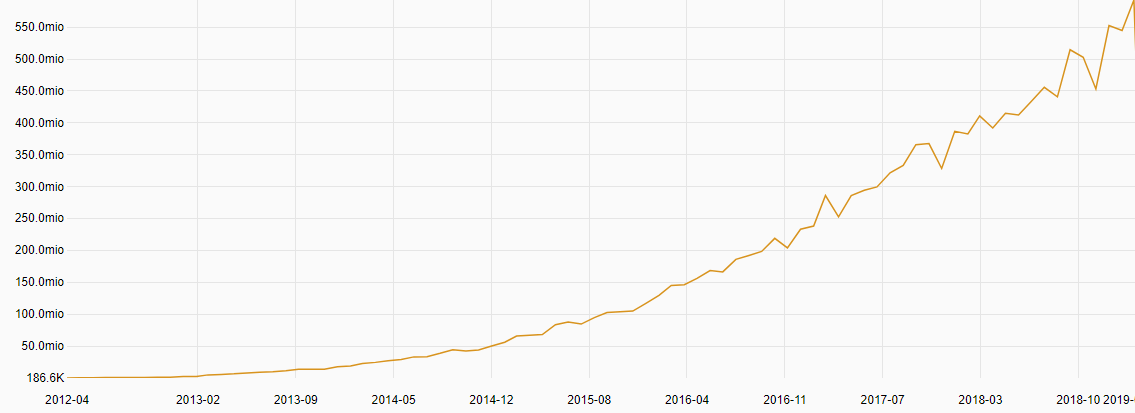

Looking at the install stats historically, in April 2015 we were doing ~67 million package installs per month, while March 2019 saw 592 million installs, an almost 9x increase in 4 years.
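To make the "almost 9x" figure concrete, here is a small sketch of the arithmetic using only the two numbers quoted above. The function names are mine, and the 47-month span (April 2015 to March 2019) and the assumption of smooth compound growth are simplifications for illustration, not something the install stats guarantee.

```python
# Install counts quoted in the text, in millions of installs per month.
INSTALLS_APRIL_2015 = 67
INSTALLS_MARCH_2019 = 592

# April 2015 to March 2019, counted in whole months (assumption for this sketch).
MONTHS_BETWEEN = 47


def growth_factor(start: float, end: float) -> float:
    """Overall multiplier between two monthly install counts."""
    return end / start


def annualized_growth(start: float, end: float, months: int) -> float:
    """Equivalent yearly growth rate, assuming smooth compound growth."""
    return (end / start) ** (12 / months) - 1


factor = growth_factor(INSTALLS_APRIL_2015, INSTALLS_MARCH_2019)
yearly = annualized_growth(INSTALLS_APRIL_2015, INSTALLS_MARCH_2019, MONTHS_BETWEEN)
print(f"{factor:.1f}x overall, roughly {yearly:.0%} per year")
```

The overall factor comes out at roughly 8.8x, which is where "almost 9x" comes from.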

Due to constraints in budget and time (back then it was all paid out of pocket and more importantly built during unpaid time outside of work hours), this single server solution stayed the same for way longer than I would have liked. There were off-site backups in case of critical failure, but thankfully we never needed them.

I believe the main issue we encountered was in late 2017, due to a hard reboot of the machine. At the time all the package download stats were stored exclusively in Redis, and they had grown into quite a large dataset. Reloading it from disk into memory took ages, and during that time the machine was struggling with other tasks. This hardly affected Composer operations though, given the fairly resilient design we have there.

Separating the repository from the website

In a nutshell, packagist.org works like this: as GitHub & co notify us of changes to git repos, the app updates the package metadata, which is then dumped to disk as a simple JSON file. As all of it is open source, there is no need for authentication or anything more complex, which means most requests we serve are handled directly by nginx reading a static JSON file from disk.
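The "dump to disk, let the web server do the rest" idea can be sketched in a few lines. This is only an illustration in Python, not the actual packagist.org code: the function name, the `vendor/package.json` path layout and the write-then-rename step are all assumptions I am making for the example.

```python
import json
import os
import tempfile
from pathlib import Path


def dump_package_metadata(root: Path, name: str, metadata: dict) -> Path:
    """Write the metadata for `name` (e.g. "vendor/package") as a static JSON file.

    The layout under `root` is hypothetical; a web server would simply be
    pointed at `root` and serve the files as-is.
    """
    target = root / f"{name}.json"
    target.parent.mkdir(parents=True, exist_ok=True)

    # Write to a temporary file in the same directory and rename it into
    # place, so a reader never sees a half-written file (an assumption of
    # this sketch, not a documented packagist.org detail).
    fd, tmp_name = tempfile.mkstemp(dir=target.parent, suffix=".tmp")
    try:
        with os.fdopen(fd, "w", encoding="utf-8") as fh:
            json.dump(metadata, fh)
        os.replace(tmp_name, target)
    except BaseException:
        os.unlink(tmp_name)
        raise
    return target
```

Calling `dump_package_metadata(Path("/tmp/repo"), "acme/demo", {...})` would leave a plain file at `/tmp/repo/acme/demo.json`. Since the result is just a file, no application code has to run when a client requests it.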

Running mirrors is therefore fairly simple as we can keep the files in sync and if the origin server is temporarily unavailable it merely stops the sync and package updates. Composer updates keep working - at worst you get slightly stale data. Composer installs from composer.lock files never contact packagist.org for package metadata anyway so those are safe no matter what.
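A minimal sketch of that behaviour, using two local directories as stand-ins for the origin server and a mirror (the real sync runs between machines, and `sync_mirror` is a made-up name for this example): when the origin is unavailable, the sync does nothing and the mirror keeps serving whatever it already has.

```python
import filecmp
import shutil
from pathlib import Path


def sync_mirror(origin: Path, mirror: Path) -> int:
    """Copy new or changed JSON files from `origin` to `mirror`.

    Returns the number of files copied. If the origin is unavailable,
    nothing is copied and the mirror keeps its (possibly stale) files.
    """
    if not origin.is_dir():
        # Origin is down: stop syncing, but leave the mirror untouched.
        return 0

    copied = 0
    for src in origin.rglob("*.json"):
        dst = mirror / src.relative_to(origin)
        # Skip files whose contents are already identical on the mirror.
        if dst.exists() and filecmp.cmp(src, dst, shallow=False):
            continue
        dst.parent.mkdir(parents=True, exist_ok=True)
        shutil.copy2(src, dst)
        copied += 1
    return copied
```

Running it twice in a row copies nothing the second time, and running it after the origin directory disappears leaves the mirror's files in place, which is the "slightly stale data" case described above.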

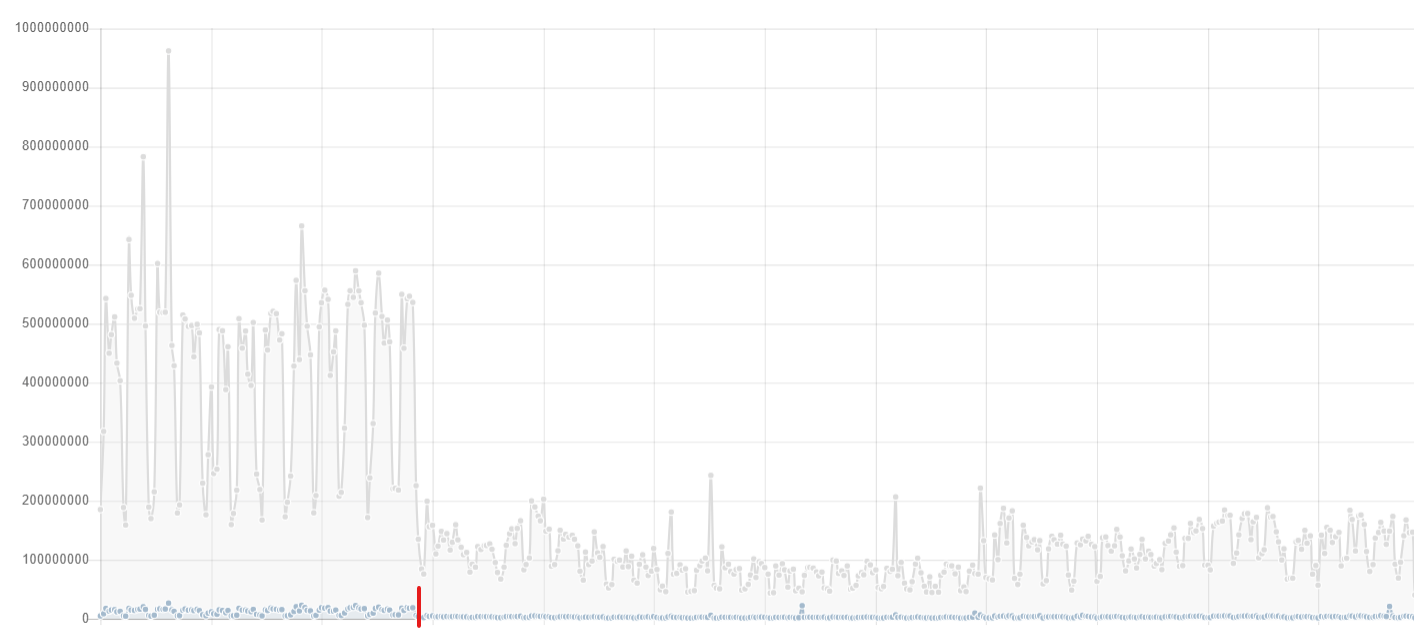

In 2017 (I think), the 500 Mbps on that single machine was being stretched again, so we decided to add a mirror of the repository data. This helped for a while, until it reached the limit again around June 2018. At that point we switched to a set of several new mirrors (in Canada, Europe and Singapore), and changed Composer so that it addresses the package repository at repo.packagist.org instead of packagist.org. This allowed us to route traffic using DNS more easily.

The graph above shows the traffic stats for that main dedicated server that was still solely responsible for hosting the website. The red mark is when we switched to repo.packagist.org and all the new mirrors started being used.

Moving to the cloud

After almost 4 years, the website was still happily hosted on that single machine, with all the associated risk this carried. The server was still on Ubuntu 14.04 and had an uptime of 440 days, as the last hard reboot hadn't been a great experience. It was time to get a more reliable solution in place, for the sake of our users (and my ability to sleep well ;)).

In the meantime, Private Packagist has given us the financial freedom to work more on Composer and Packagist, as well as providing us with a real budget for hosting. It also brought us more experience hosting on AWS - we launched Private Packagist in late 2016 and have not had a single severe service interruption yet.

We decided to migrate the packagist.org website to AWS as well, including the database, metadata update workers, etc. This makes it much easier to build a highly-available setup, where machines can be rebooted or even rebuilt safely without bringing down the whole site.

This puts us in a good position to keep scaling the service as ever more people come to use it (the Magento and Drupal communities are in the process of migrating to Composer).

The next big step will be finalizing Composer 2.0 changes, bringing an improved experience on the client side of the Composer world!